Reinforcement Learning for Autonomous Navigation on Uncertain Terrain

Implemented a deep reinforcement learning pipeline based on A3C.

Work done with Dr. M Vidyasagar FRS (IIT Hyderabad)

Problem

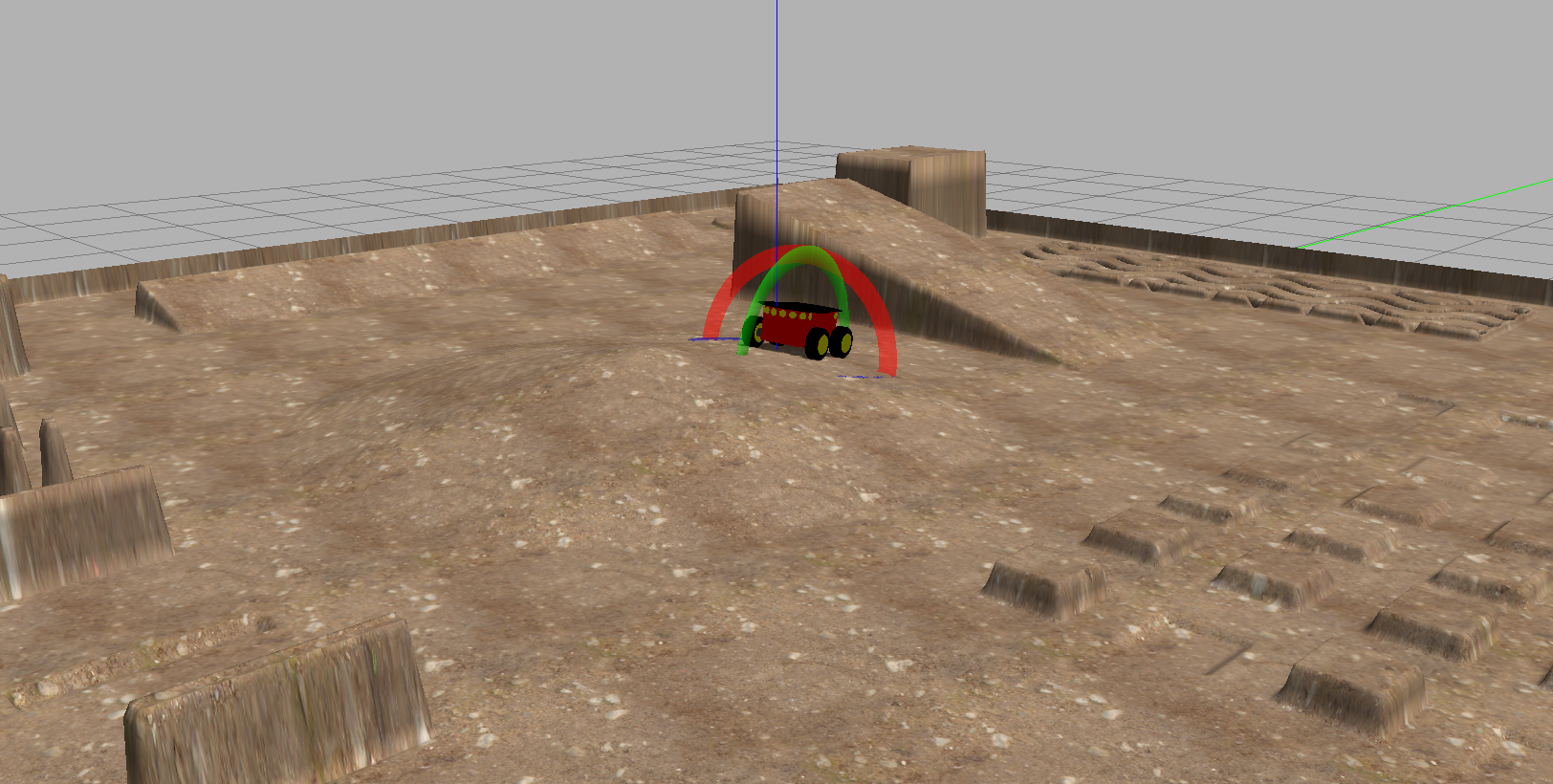

The goal is online, local planning for unmanned ground vehicles (UGVs) navigating unstructured, uneven, and uncertain terrain. The problem is framed as a deep RL task in which the agent must learn to navigate cluttered environments containing both static and dynamic obstacles while keeping to traversable regions of rough ground.

A3C Algorithm

The Asynchronous Advantage Actor-Critic (A3C) algorithm runs multiple parallel worker threads, each interacting with its own copy of the environment. Each worker computes local policy gradients and asynchronously updates the shared global network parameters.
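
The sketch below shows the asynchronous update pattern, using Python threads. It is a minimal illustration under stated assumptions, not this project's implementation:

- A hypothetical 3-armed bandit (`ARM_MEANS`) stands in for the navigation environment.
- Each worker copies the global parameters and collects a short rollout.
- It then computes an advantage-weighted policy gradient and a critic update locally, and pushes both to the shared `GlobalNet`.
- All names and hyperparameters are illustrative.
- The lock is a simplification. A3C as published applies updates lock-free (Hogwild-style), and a real pipeline would use neural networks and an autodiff framework.

```python
import math
import random
import threading

# Hypothetical stand-in for the navigation task: a 3-armed bandit with these mean rewards.
ARM_MEANS = [0.2, 0.5, 0.9]


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


class GlobalNet:
    """Shared parameters: softmax logits (actor) and a scalar value estimate (critic)."""

    def __init__(self, n_actions):
        self.logits = [0.0] * n_actions
        self.value = 0.0
        # Simplification: published A3C applies updates lock-free (Hogwild-style).
        self.lock = threading.Lock()

    def snapshot(self):
        with self.lock:
            return list(self.logits), self.value

    def apply(self, grad_logits, grad_value, lr):
        with self.lock:
            for i, g in enumerate(grad_logits):
                self.logits[i] += lr * g  # ascent on expected return
            self.value += lr * grad_value  # descent on the critic's squared error


def worker(net, seed, n_updates, rollout_len, lr):
    rng = random.Random(seed)
    n = len(ARM_MEANS)
    for _ in range(n_updates):
        logits, value = net.snapshot()  # sync local copy with the global parameters
        probs = softmax(logits)
        g_logits = [0.0] * n
        g_value = 0.0
        for _ in range(rollout_len):
            a = rng.choices(range(n), weights=probs)[0]
            r = ARM_MEANS[a] + rng.gauss(0.0, 0.1)
            adv = r - value  # advantage with the critic's value as baseline
            for i in range(n):
                # d log pi(a) / d logit_i = 1[i == a] - pi(i)
                g_logits[i] += ((1.0 if i == a else 0.0) - probs[i]) * adv
            g_value += adv  # negative gradient of 0.5 * adv**2 w.r.t. value
        # Push averaged local gradients to the shared network asynchronously.
        net.apply([g / rollout_len for g in g_logits], g_value / rollout_len, lr)


def train(n_workers=4, n_updates=300, rollout_len=8, lr=0.1):
    net = GlobalNet(len(ARM_MEANS))
    threads = [
        threading.Thread(target=worker, args=(net, seed, n_updates, rollout_len, lr))
        for seed in range(n_workers)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return net


if __name__ == "__main__":
    net = train()
    print("policy:", [round(p, 3) for p in softmax(net.logits)])
```

Workers read parameters that other workers may have updated since their last snapshot. This staleness is tolerated by design in A3C. After training, the policy should concentrate on the arm with the highest mean reward.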

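The policy gradient with advantage estimation:

\[
\nabla_\theta J(\theta) = \mathbb{E}_{\pi_\theta}\left[\nabla_\theta \log \pi_\theta(a_t \mid s_t)\,\hat{A}_t\right]
\]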

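where the advantage \(\hat{A}_t\) is estimated via Generalized Advantage Estimation (GAE) from the critic's value function \(V_\phi\):

\[
\hat{A}_t = \sum_{l=0}^{\infty} (\gamma\lambda)^l\,\delta_{t+l}, \qquad \delta_t = r_t + \gamma V_\phi(s_{t+1}) - V_\phi(s_t)
\]

with discount factor \(\gamma\) and GAE parameter \(\lambda \in [0, 1]\).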
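
Over a finite rollout, the GAE sum is usually computed with the backward recursion \(\hat{A}_t = \delta_t + \gamma\lambda\,\hat{A}_{t+1}\), bootstrapping from the critic's value of the state after the last step. The function below is a minimal sketch under these assumptions:

- Rollout data arrives as plain Python lists.
- A hypothetical `dones` flag cuts the recursion at episode boundaries, such as a collision or reaching the goal.
- `gamma=0.99` and `lam=0.95` are common defaults, not values taken from this project.

```python
def compute_gae(rewards, values, last_value, gamma=0.99, lam=0.95, dones=None):
    """Return (advantages, returns) for one rollout.

    rewards[t], values[t] = V(s_t); last_value = V(s_T) for the state after the rollout.
    dones[t] is True if the episode terminated after step t (s_{t+1} is terminal).
    """
    T = len(rewards)
    if dones is None:
        dones = [False] * T
    advantages = [0.0] * T
    gae = 0.0
    next_value = last_value
    for t in reversed(range(T)):
        not_done = 0.0 if dones[t] else 1.0
        # One-step TD error delta_t; no bootstrapping past a terminal state.
        delta = rewards[t] + gamma * next_value * not_done - values[t]
        # A_t = delta_t + gamma * lambda * A_{t+1}, reset at episode boundaries.
        gae = delta + gamma * lam * not_done * gae
        advantages[t] = gae
        next_value = values[t]
    # Critic targets: advantage plus the value baseline.
    returns = [a + v for a, v in zip(advantages, values)]
    return advantages, returns
```

Setting `lam=0` recovers one-step TD advantages. Setting `lam=1` gives discounted returns minus the value baseline, trading lower bias for higher variance.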

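The value loss uses the squared temporal-difference error, and an entropy bonus \(H(\pi_\theta)\) encourages exploration. Combined, the per-step loss is:

\[
\mathcal{L}(\theta, \phi) = -\log \pi_\theta(a_t \mid s_t)\,\hat{A}_t + c_v \big(r_t + \gamma V_\phi(s_{t+1}) - V_\phi(s_t)\big)^2 - \beta\, H\big(\pi_\theta(\cdot \mid s_t)\big)
\]

where \(V_\phi\) is the critic's value estimate and \(c_v\), \(\beta\) weight the value and entropy terms.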
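
The function below sketches how the three terms combine over one rollout by evaluating the loss numerically. It is illustrative only and assumes:

- per-step action probabilities as inputs;
- precomputed advantages, for example from GAE;
- illustrative weights `c_v=0.5` and `beta=0.01`;
- no episode ending inside the rollout.

In an actual training pipeline these terms would be built from network outputs in an autodiff framework, with the advantage treated as a constant in the policy term.

```python
import math


def a3c_loss(probs, actions, rewards, values, last_value, advantages,
             gamma=0.99, c_v=0.5, beta=0.01):
    """Average A3C loss over a rollout: policy term + c_v * TD^2 - beta * entropy.

    probs[t] is the action distribution pi(. | s_t); values[t] = V(s_t);
    last_value = V(s_T). Assumes no episode terminates inside the rollout.
    """
    T = len(actions)
    policy_loss = value_loss = entropy = 0.0
    for t in range(T):
        next_v = values[t + 1] if t + 1 < T else last_value
        td = rewards[t] + gamma * next_v - values[t]  # one-step TD error
        policy_loss += -math.log(probs[t][actions[t]]) * advantages[t]
        value_loss += td ** 2
        entropy += -sum(p * math.log(p) for p in probs[t] if p > 0.0)
    policy_loss /= T
    value_loss /= T
    entropy /= T
    # Subtracting the entropy rewards more stochastic (exploratory) policies.
    total = policy_loss + c_v * value_loss - beta * entropy
    return total, policy_loss, value_loss, entropy
```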

OctoMap-Based Occupancy Mapping

The environment is represented with OctoMap, a hierarchical 3D occupancy mapping framework based on octrees. OctoMap keeps memory usage low through its multi-resolution representation and supports probabilistic updates from noisy sensor data. The occupancy of each node is updated recursively in log-odds form:

\[
L(n \mid z_{1:t}) = L(n \mid z_{1:t-1}) + L(n \mid z_t)
\]

where \(L = \log\frac{P}{1 - P}\) is the log-odds of the occupancy probability \(P\) of node \(n\) given sensor measurements \(z\).
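
The per-node update is a running sum in log-odds space. The class below is a minimal sketch of that update and makes several simplifying assumptions:

- A flat dictionary keyed by voxel index replaces an actual octree.
- The hit/miss probabilities (0.7/0.4) and clamping bounds (0.12/0.97) are illustrative values.
- OctoMap also clamps log-odds values so that a voxel can change state quickly when the scene changes (for example, when a dynamic obstacle moves away). `L_MIN`/`L_MAX` mimic this clamping.

```python
import math


def logit(p):
    return math.log(p / (1.0 - p))


def prob(l):
    return 1.0 - 1.0 / (1.0 + math.exp(l))  # inverse of logit (sigmoid)


# Illustrative sensor model and clamping bounds (assumed values).
L_HIT = logit(0.7)
L_MISS = logit(0.4)
L_MIN, L_MAX = logit(0.12), logit(0.97)


class OccupancyGrid:
    """Flat voxel map storing L(n | z_{1:t}); not an octree."""

    def __init__(self):
        self.log_odds = {}  # unknown voxels implicitly start at 0 (P = 0.5)

    def update(self, key, hit):
        # L(n | z_{1:t}) = L(n | z_{1:t-1}) + L(n | z_t), then clamp.
        l = self.log_odds.get(key, 0.0) + (L_HIT if hit else L_MISS)
        self.log_odds[key] = min(max(l, L_MIN), L_MAX)

    def occupancy(self, key):
        return prob(self.log_odds.get(key, 0.0))
```

Because each update is an addition, repeated consistent measurements drive a voxel toward certainty. A single contradictory measurement only nudges it back.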

Progressive Obstacle Curriculum

- Obstacles are introduced progressively during training, encouraging early exploration before the environment becomes cluttered

- Obstacle density is scheduled: starting sparse and increasing to the target density over the first 50% of training

- Both static obstacles (walls, rocks) and dynamic obstacles (moving objects) are incorporated

- User-defined obstacle placement allows testing specific navigation scenarios
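
The code below is one possible implementation of this curriculum. It is a sketch with these assumptions:

- The ramp is linear. The description above states only that density rises from sparse to the target over the first 50% of training.
- Densities are measured in obstacles per square metre.
- Obstacles are placed uniformly at random.
- A hypothetical `dynamic_fraction` sets the share of moving obstacles.
- User-defined obstacles passed via `fixed` are always kept.

All names and default values are illustrative.

```python
import random
from dataclasses import dataclass


@dataclass
class Obstacle:
    x: float
    y: float
    dynamic: bool


def obstacle_density(step, total_steps, start_density, target_density, ramp_fraction=0.5):
    """Linear ramp (assumed shape) over the first ramp_fraction of training, then constant."""
    ramp_steps = ramp_fraction * total_steps
    if step >= ramp_steps:
        return target_density
    frac = step / ramp_steps
    return start_density + frac * (target_density - start_density)


def sample_obstacles(step, total_steps, area, start_density=0.01, target_density=0.1,
                     dynamic_fraction=0.3, fixed=(), rng=None):
    """Sample a scenario: user-defined obstacles plus random ones at the scheduled density."""
    if rng is None:
        rng = random.Random()
    width, height = area
    density = obstacle_density(step, total_steps, start_density, target_density)
    n_random = round(density * width * height)
    obstacles = list(fixed)  # user-defined placements are always included
    for _ in range(n_random):
        obstacles.append(Obstacle(rng.uniform(0.0, width), rng.uniform(0.0, height),
                                  dynamic=rng.random() < dynamic_fraction))
    return obstacles
```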

Results

The progressive curriculum approach resulted in a 14% increase in success rate for the Pioneer robot navigating uneven terrain in Gazebo simulation, compared to training with a fixed obstacle density from the start.